Is AI Content Bad for SEO? What Google Actually Penalizes in 2026

Google doesn't ban AI content. But it does penalize the patterns AI content makes easy. Here's what actually triggers downranking, and how to publish AI-assisted posts that rank.

TL;DR. Google doesn’t penalize AI content for being AI. It penalizes content that’s thin, derivative, unhelpful, or written for search engines instead of readers. AI makes all four of those mistakes easier to make at scale, which is why most AI content fails. The posts that win are AI-assisted, human-edited, and humanized at the prompt level so they don’t read like every other generated post crowding the SERP.

What Google actually says

The policy language has been the same since Google’s official AI content guidance was first published in early 2023 and reinforced through subsequent spam policy updates:

“Appropriate use of AI or automation is not against our guidelines. This means that it is not used to generate content primarily to manipulate search rankings, which is against our spam policies.”

Translation: the production method doesn’t matter. The intent does. Content that’s helpful, original, and serves a real reader gets ranked the same whether a person typed it or a model generated it.

That’s the policy. The reality is messier.

The gap between policy and reality

Here’s what nobody at Google will say in public, but every SEO who watched their rankings drop in 2024 and 2025 knows: pure copy-paste AI content tends to underperform. Not because Google has a magic AI detector running on every page. Because of three indirect signals that catch up with it.

Signal one: helpful content classifier. Google’s helpful content system, which Google folded into its core ranking systems in March 2024, produces a sitewide quality signal. It evaluates whether your content demonstrates first-hand experience, original analysis, and real expertise. Generic AI output fails this test by default. Enough thin posts drag down the whole site, not only the pages they sit on.

Signal two: engagement metrics. Bounce rate, dwell time, scroll depth, return visits. Pure AI content reads predictably. Readers spot it within seconds and leave. Google sees the pattern and stops sending traffic.

Signal three: link velocity. Other sites don’t link to forgettable content. AI posts that read like every other AI post on the topic earn zero backlinks. Domain authority stalls. New pages on the same site take longer to rank.

None of these are “AI penalties.” They’re quality penalties that AI content trips disproportionately because it’s so easy to make at volume without thinking.

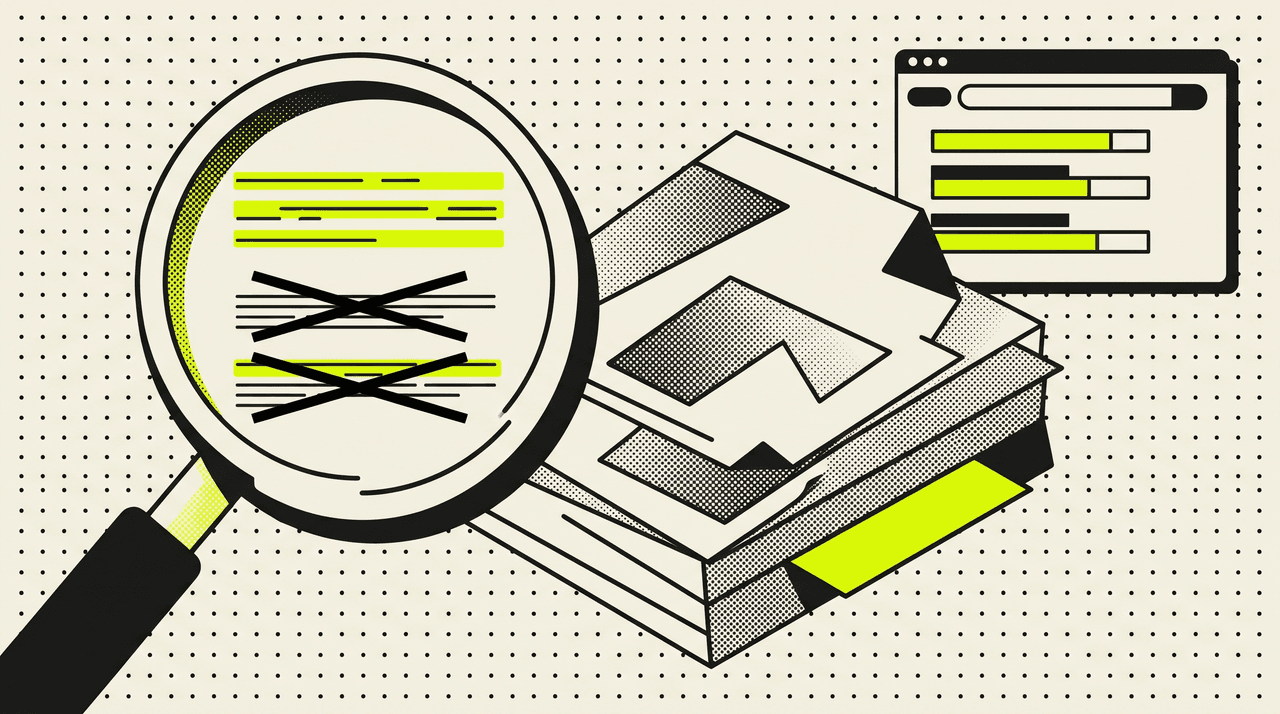

Why pure AI content reads as AI

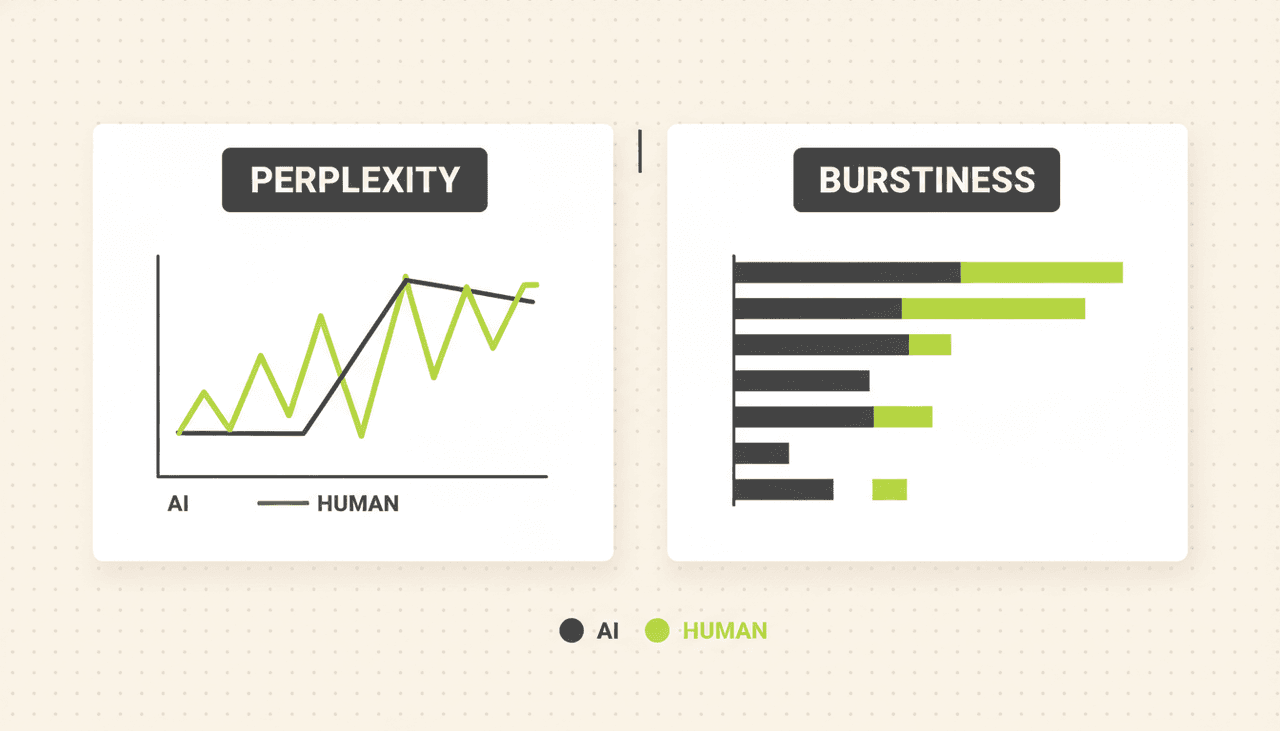

Two statistical patterns give it away. They’re the same patterns every AI detector (Originality.ai, GPTZero, Turnitin, Copyleaks) measures.

Perplexity measures how unpredictable each word choice is given the words before it. The lower the score, the more predictable the text. Human writers occasionally reach for slightly unexpected words. Models lean toward the highest-probability next token. Sentence after sentence of high-probability choices feels flat, regardless of the topic.
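To make that concrete, here's a minimal sketch of how a perplexity score falls out of per-token probabilities. The probabilities are made-up illustration values, not output from any real model or detector, and `perplexity` is a hypothetical helper name.

```python
import math

def perplexity(token_probs):
    """Perplexity = exp of the average negative log-probability per token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)

# Made-up probabilities, for illustration only.
predictable = [0.9, 0.8, 0.9, 0.85]  # every word is the likely one
surprising = [0.9, 0.1, 0.6, 0.05]   # a few unexpected word choices

# The text with unexpected words scores higher.
print(perplexity(surprising) > perplexity(predictable))  # True
```

A run of likely words keeps the score close to 1. A couple of unlikely words push it up fast, which is why one odd word choice per paragraph changes how the whole passage scores.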

Burstiness is the variation in sentence length within a paragraph. Humans write a 6-word punchline, then a 28-word setup, then a fragment. Models trained to produce coherent prose default to 18 to 22 word sentences across the board. Uniform rhythm reads robotic even when the vocabulary is fine.
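Here's a rough way to measure that on your own drafts. It's a sketch, not what any detector actually computes: it assumes burstiness can be approximated by the standard deviation of sentence lengths, and the splitter is naive (it will trip on abbreviations like "e.g."). The function names are hypothetical.

```python
import re
import statistics

def sentence_lengths(paragraph):
    """Split on ., !, ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", paragraph) if s.strip()]
    return [len(s.split()) for s in sentences]

def burstiness(paragraph):
    """Population standard deviation of sentence lengths (0.0 = uniform)."""
    lengths = sentence_lengths(paragraph)
    return statistics.pstdev(lengths) if lengths else 0.0

uniform = "The cat sat on the mat. The dog lay on the rug. The bird sat in the tree."
varied = "Stop. The cat sat quietly on the old mat while the dog paced around. Why?"

print(sentence_lengths(uniform), burstiness(uniform))  # [6, 6, 6] 0.0
print(sentence_lengths(varied))                        # [1, 13, 1]
```

The uniform paragraph scores zero. The varied one scores well above it, even though neither uses unusual vocabulary.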

When you run a typical AI blog post through a detector, it’s not the words that flag it. It’s the math behind the rhythm.

What about the “no one can prove anything is downranked” argument?

Fair point. Google has never published a paper showing “AI content gets X% lower rankings.” They probably never will, because the helpful content system isn’t keyed on production method. It’s keyed on outcomes.

But anyone running content sites at scale from 2024 through 2026 has seen the pattern. Sites that pivoted to high-volume AI publishing without quality controls lost traffic. Sites that used AI as drafting assistance for humans either held flat or grew. The forums are full of case studies in both directions.

So the honest framing is: there’s no proof Google has a button that says “downrank AI content.” There’s plenty of correlational evidence that the failure modes AI content makes easy are exactly the failure modes the helpful content classifier punishes. Different mechanism, same outcome.

The pattern that works

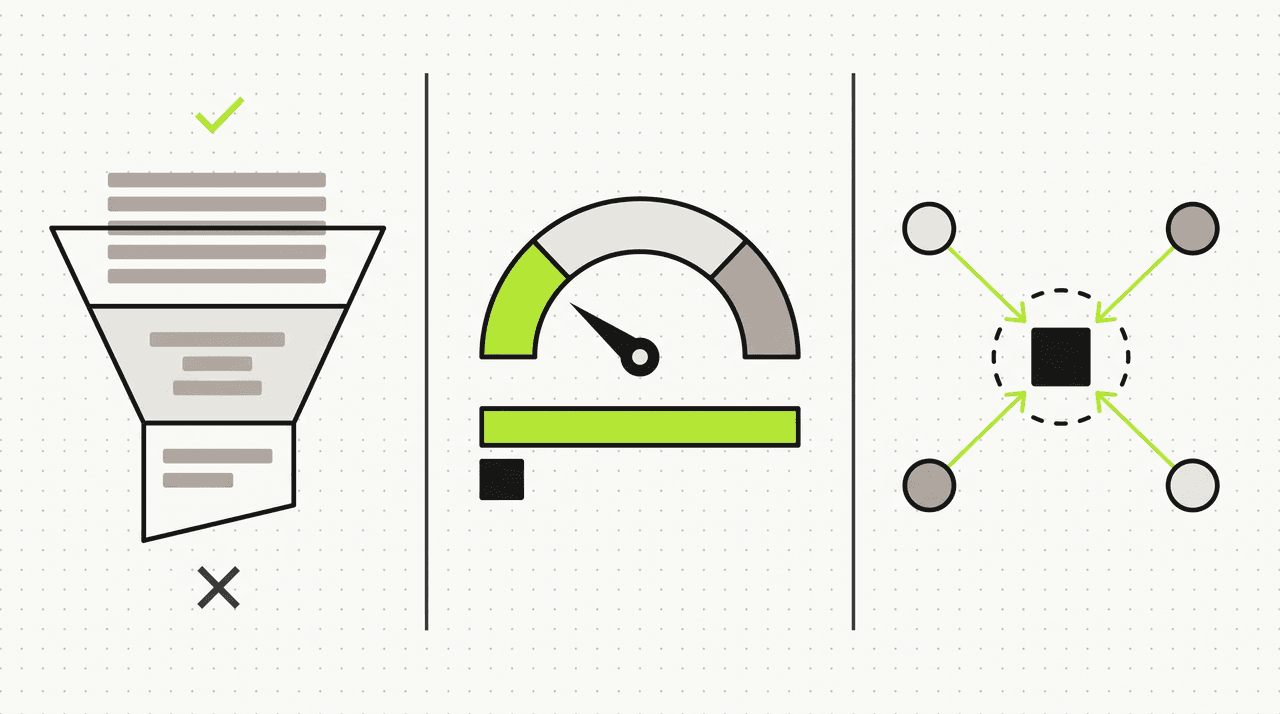

Three things separate AI content that ranks from AI content that disappears.

1. Real keyword research before generation, not after. Most AI posts target whatever the writer thought sounded good. Posts that rank target a specific primary keyword chosen from live SERP data, with a target length calibrated to what’s already ranking. If the top 10 for your term averages 1,800 words, a 700-word post won’t compete no matter how well-written it is.

2. Humanization at the prompt level, not as a post-hoc cleanup. Bolting “make this sound more human” onto a generated draft barely moves the needle. The fix has to happen in the generation step, with explicit rules: vary sentence length within paragraphs, ban em dashes, require contractions, drop the predictable openers (“In today’s fast-paced…”), include at least one honest tradeoff. Models follow these instructions when they’re in the prompt. They ignore them when you tell the model after the fact.

3. First-hand evidence the model couldn’t have generated. Real screenshots. A specific number from your own data. An anecdote about a customer. A quote from a Reddit thread you actually read. These break the statistical pattern detectors look for and signal to readers that a human was in the loop.

Posts that get all three usually rank. Posts that skip any one of them usually don’t, regardless of word count.
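The length calibration in step 1 is simple arithmetic. Here's a sketch, assuming you've already collected word counts for the pages ranking today. The counts, the 10% margin, the choice of median over mean, and the `target_word_count` name are all illustrative assumptions.

```python
import statistics

def target_word_count(serp_word_counts, margin=0.1):
    """Median length of the ranking pages plus a margin, rounded to the nearest 50."""
    median = statistics.median(serp_word_counts)
    return int(round(median * (1 + margin) / 50) * 50)

# Hypothetical word counts for the current top 10 (they average 1,800).
top_10 = [1500, 1650, 1700, 1750, 1800, 1800, 1900, 1950, 2000, 1950]

print(target_word_count(top_10))            # 2000
print(target_word_count(top_10, margin=0))  # 1800
```

Median is used so one 6,000-word outlier doesn't inflate the target. Against this SERP, a 700-word draft is less than half the length it needs to be.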

The humanization rules nobody wants to publish

Most “humanize AI content” advice stops at vague suggestions. Here’s the concrete pattern that works. We use a version of this list internally on every blog generation. For the full tactical guide with prompt templates and a before/after on a real passage, see our deep dive on humanizing AI content.

- Banned phrases. Hard block on the language that screams AI: “delve into,” “in today’s fast-paced,” “at its core,” “navigate the,” “unlock the power,” “empower you to,” “leverage,” “seamless,” “revolutionary,” “cutting-edge,” “tapestry of,” “in the realm of,” “furthermore,” “moreover,” “additionally,” “it’s important to note that,” “when it comes to.”

- Punctuation discipline. No em dashes. No en dashes in prose. Regular hyphens only for compound words.

- Burstiness target. Every paragraph mixes short (5 to 10 words), medium (11 to 20 words), and long (21 to 35 words) sentences. Fragments allowed.

- Contractions on by default. It’s, don’t, you’ve, we’re. Formal expansion (“it is,” “do not”) only when grammar demands it.

- No parallel three-part lists repeated across sentences. “We help you plan, create, and publish. We make it simple, effective, and fast.” Reads as AI from the first word.

- At least one drawback. Pure cheerleading reads as AI. Every post acknowledges what the product or method doesn’t do well.

- Pronoun cohesion. Use “it,” “they,” “this” to reference prior nouns. Don’t repeat “Postibo” three sentences in a row.

This is the difference between AI content that gets flagged by Originality.ai 8 times out of 10 and AI content that passes 8 times out of 10. Same model. Same topic. Different prompt rules.
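Here's what "rules in the prompt" can look like in practice. This is a minimal sketch, not our production prompt: the phrase list is a small subset of the one above, the wording of each rule is paraphrased, and `build_system_prompt` is a hypothetical helper. How the string gets sent to a model is left out on purpose.

```python
# Small subset of the banned-phrase list above, for illustration.
BANNED_PHRASES = ["delve into", "at its core", "leverage", "seamless", "furthermore"]

STYLE_RULES = [
    "Mix short, medium, and long sentences in every paragraph. Fragments are fine.",
    "Do not use em dashes or en dashes.",
    "Use contractions by default.",
    "Do not repeat parallel three-part lists across sentences.",
    "Acknowledge at least one drawback or limitation.",
    "Refer back to earlier nouns with pronouns instead of repeating them.",
]

def build_system_prompt(primary_keyword, target_words):
    """Put the style rules in the prompt itself, before generation starts."""
    rules = "\n".join(f"- {rule}" for rule in STYLE_RULES)
    banned = ", ".join(f'"{p}"' for p in BANNED_PHRASES)
    return (
        f"Write a blog post of about {target_words} words "
        f"targeting the keyword '{primary_keyword}'.\n"
        f"Follow every rule below:\n{rules}\n"
        f"Never use these phrases: {banned}."
    )

print(build_system_prompt("ai content seo", 1800))
```

Keeping the rules in plain lists means you can add a phrase or tighten a rule once and have it apply to every generation call.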

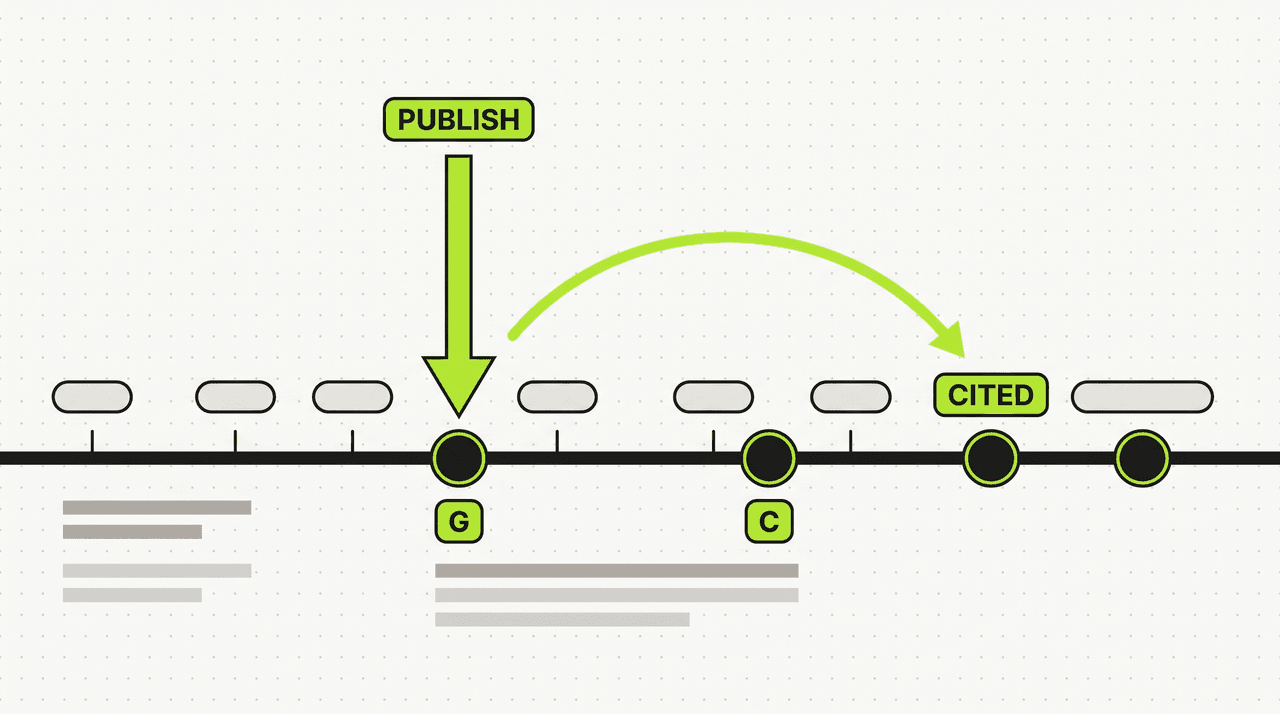

The “training data lag” insight most people miss

LLMs don’t see the internet in real time. GPT-5, Claude, Gemini all have training cutoffs that lag the present by months or years. When their next refresh is trained, it includes whatever the open web looked like 6 to 18 months earlier.

That has two implications for SEO in 2026.

One: content you publish today is what AI search engines will cite tomorrow. ChatGPT’s responses, Perplexity’s citations, and Google’s AI Overviews all draw on content that was already indexed, either when the underlying model was trained or when the answer is retrieved. Publishing now is the closest thing SEO has to a time-arbitrage opportunity.

Two: if you wait until “the dust settles” to invest in AI-assisted SEO, you’re conceding the citation surface to whoever published first. The early movers in any topical niche will be the ones quoted by every AI search interface for the next two years.

That’s why pre-launch content matters more than it used to. Six months of indexed-and-aging content is worth more than a launch-day content sprint. Postibo’s AEO and GEO layers bake the structure for this citation surface into every post by default: FAQ schema, semantic entity markup, the lot.

Practical checklist for AI-assisted content that ranks

Use this before publishing anything written with AI assistance.

- Did you do keyword research first? If you can’t name the primary keyword, search volume, and current top-3 ranking pages for it, the post isn’t ready.

- Does the post read like every other post on this topic? Open the top 3 SERP results. If your draft sounds like an average of them, rewrite the framing.

- Did the model use any banned phrases? Search the draft for “delve,” “leverage,” “seamless,” “in today’s.” If any appear, replace them.

- Is there at least one specific number, screenshot, or anecdote that couldn’t have come from training data? If not, the post has nothing to show for the Experience part of E-E-A-T.

- Are sentence lengths varied within paragraphs? If most sentences are 18 to 22 words long, break some up.

- Did you include at least one honest tradeoff or limitation? Cheerleading reads as AI.

- Is there FAQ schema? Many queries now trigger AI Overviews. FAQ schema gives them a clean question-and-answer block to cite.

If your draft clears all seven checks, your AI-assisted post has the same ranking probability as a hand-written post by a competent writer. The mechanism Google uses to evaluate quality doesn’t care which one produced it.
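A few of those checks are mechanical enough to script. Here's a sketch that covers three of them: banned phrases, dashes, and uniform sentence length. The "more than half" threshold, the phrase list, and the `check_draft` name are assumptions for illustration, and the sentence splitter is deliberately naive.

```python
import re

# The four search terms from the checklist above.
BANNED = ["delve", "leverage", "seamless", "in today's"]

def check_draft(text):
    """Return a list of human-readable problems found in the draft."""
    # Normalize curly apostrophes so "in today\u2019s" still matches.
    normalized = text.replace("\u2019", "'").lower()
    problems = [f"banned phrase: {p}" for p in BANNED if p in normalized]

    # \u2014 is an em dash, \u2013 is an en dash.
    if "\u2014" in text or "\u2013" in text:
        problems.append("contains an em or en dash")

    lengths = [len(s.split()) for s in re.split(r"[.!?]+", text) if s.strip()]
    uniform = sum(1 for n in lengths if n in range(18, 23))
    if lengths and uniform / len(lengths) > 0.5:
        problems.append("most sentences are 18 to 22 words long")
    return problems

print(check_draft("Let's delve into this \u2014 now."))
# ['banned phrase: delve', 'contains an em or en dash']
print(check_draft("It works. We tested it twice."))  # []
```

An empty list doesn't mean the post is ready. It only means the draft cleared the checks a script can run, and the other four still need a human.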

How Postibo handles this

Postibo generates blog content with these rules baked into every prompt, not bolted on afterward. Keyword research runs by default before generation starts. The humanization rules above (with another 80-plus banned phrases not listed here) are injected into every Claude call. Post-processing scrubs anything that slipped past. The AEO and GEO layers add FAQ schema, semantic entity markup, and structured citations so your posts get picked up by both Google rankings and AI search citations. Drafts ship straight into your WordPress or Shopify CMS without copy-paste.

We can’t promise universal invisibility to every AI detector that might exist in six months. Nobody honest in this space can. What we can promise is that the posts coming out aren’t going to read like the AI bloat your readers have learned to scroll past.

What to do next

Start with one piece of content. Take a topic you’d planned to write for your site anyway. Run keyword research on it before generation. Use a tool (Postibo or otherwise) that applies humanization at the prompt level. Add one screenshot or specific number from your own work. Publish it. Watch the rankings over 30 days against your usual hand-written baseline.

If it ranks, you’ve found a workflow. If it doesn’t, the diagnosis won’t be “AI content is bad for SEO.” It’ll be one of the seven items in the checklist above. Fix that, ship the next one.

The sites winning SEO in 2026 aren’t the ones avoiding AI. They’re the ones using it without leaving fingerprints.

If this was useful, the tactical follow-up on humanization shows the prompt-level fix in detail. Postibo ships in waves starting summer 2026. Waitlist members get longer trials than public-launch signups will. Join here.

Frequently asked questions

- Will my site get penalized by Google for publishing AI content?

- Not for being AI. Google's spam policy targets content generated to manipulate rankings, regardless of method. Where AI content gets in trouble is the helpful content system, which evaluates whether content shows first-hand experience and original analysis. Pure copy-paste AI output usually fails that test.

- Do AI detectors actually affect SEO?

- Indirectly. Google itself doesn't run public AI detectors as a ranking signal. But the patterns detectors measure (low perplexity, low burstiness, generic phrasing) overlap heavily with what the helpful content classifier downranks. Failing detectors is a symptom, not a cause.

- Is it safe to publish AI content if it passes Originality.ai or GPTZero?

- Safer than publishing content that fails. But passing a detector isn't the same as ranking. Detectors measure surface patterns. Search ranking measures usefulness, expertise, and engagement. A post can pass every detector and still rank poorly because it has nothing original to say.

- How much human editing does AI content need to rank?

- Less if the generation step uses keyword research, humanization rules, and forced citation of first-hand evidence. More if it's a raw model output. The right framing: AI should produce a draft equivalent to a competent freelancer's first pass. A human still needs to verify facts, swap in specifics, and tighten the framing before publishing.

- Is there a way to rank for AI Overviews if my content is AI-generated?

- Yes, and the bar is lower than for classic rankings. AI Overviews prefer FAQ-structured content with direct, short answers and clean schema. Generate with FAQPage schema enabled, write 40 to 60 word direct answers under each question heading, and your odds of being cited are comparable to a hand-written post with the same structure.

- Does Postibo guarantee my content will rank?

- No, and run from anyone who does. Ranking depends on domain authority, competitor strength, topic depth, and a dozen other factors, none of which a content tool controls. What Postibo can guarantee is that your content ships with proper keyword targeting, humanization, schema, and internal linking. That's the floor. The ceiling is up to your site.
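For reference, the FAQPage schema mentioned in the answers above has a small, fixed shape. Here's a sketch that builds it from question-and-answer pairs using the schema.org `FAQPage`, `Question`, and `Answer` types. The `faq_jsonld` helper and the sample pair are illustrative, and this isn't a description of how Postibo generates its markup.

```python
import json

def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

faqs = [
    (
        "Do AI detectors actually affect SEO?",
        "Indirectly. Failing detectors is a symptom, not a cause.",
    ),
]

print(json.dumps(faq_jsonld(faqs), indent=2))
```

The printed JSON goes inside a script tag of type `application/ld+json` on the page. Keep the answer text identical to what's visible on the page.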