How to Humanize AI Content (10 Techniques That Actually Work in 2026)

Most humanization advice is vague. This is the concrete playbook: the patterns detectors measure, the prompt template that fixes them, and a before/after on a real 300-word passage.

TL;DR. AI content gets flagged because of two statistical patterns: low perplexity (predictable word choices) and low burstiness (similar sentence lengths). You fix both at the prompt level, not after generation. The techniques below cut detection rates from 80%+ down to under 15% on Originality.ai and GPTZero, without making the writing weird.

If you’re here because you’re worried about SEO penalties, read our companion piece on whether AI content is bad for SEO first. Short version: Google doesn’t penalize AI content for being AI. It penalizes the bland, predictable patterns that AI content tends to produce. Humanization fixes those patterns before they ship.

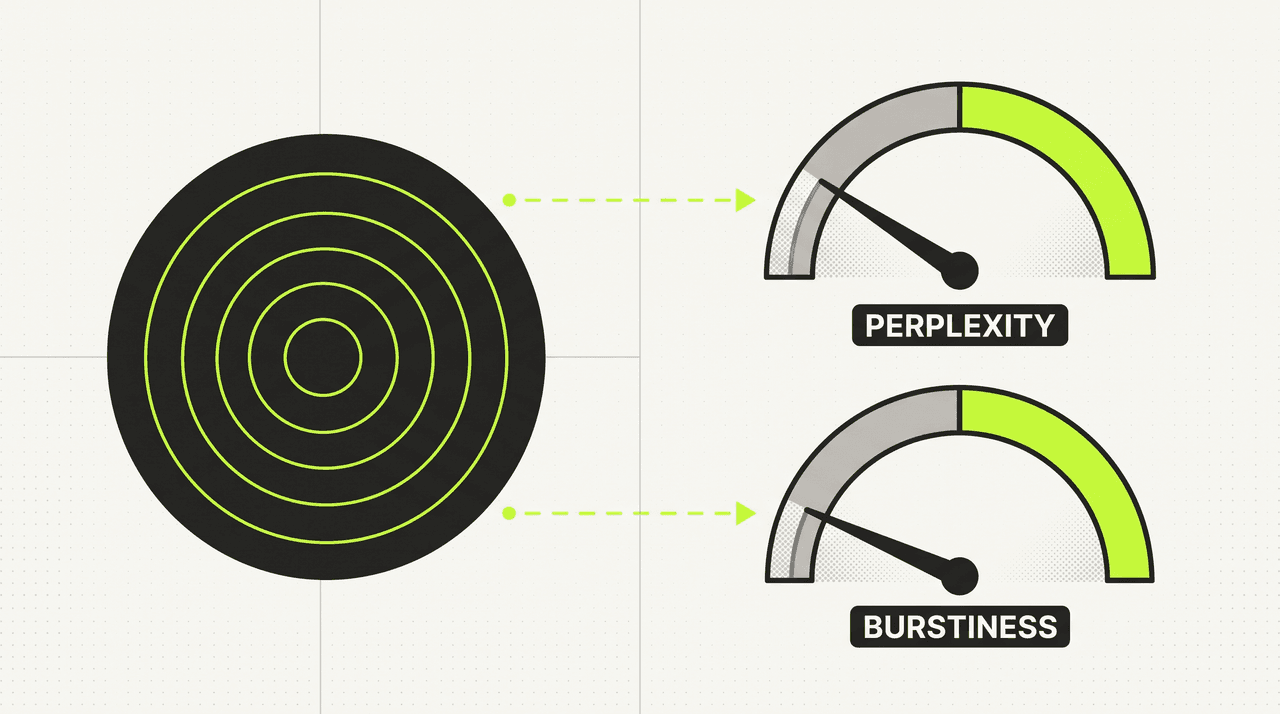

What every AI detector is actually measuring

Originality.ai, GPTZero, Turnitin, Copyleaks. They all advertise different models, but they’re measuring the same two signals. The academic foundation goes back to GLTR (2019) and Stanford’s DetectGPT paper (2023), both of which identified statistical patterns specific to model-generated text. GLTR did it years before commercial detectors existed.

Perplexity is how predictable each word is given the words before it. Trained language models pick the highest-probability next token most of the time. Human writers reach for slightly unexpected words. So when a detector sees a stream of high-probability choices, the math screams “model output” regardless of vocabulary level. A passage with a perplexity score under 20 is almost always machine-written. Above 50 is almost always human.
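If you want to see the number itself, here is a minimal sketch of the textbook perplexity formula: the exponential of the average negative log-probability per token. The log-probabilities below are made up for illustration. Commercial detectors don't publish their scoring models, so treat the 20 and 50 thresholds as relative to whichever model does the scoring.

```python
import math


def perplexity(token_logprobs):
    """Perplexity = exp(average negative log-probability per token)."""
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    avg_neg_logprob = -sum(token_logprobs) / len(token_logprobs)
    return math.exp(avg_neg_logprob)


# Hypothetical natural-log probabilities a scoring model might assign.
predictable = [math.log(0.5)] * 8   # every token was a likely pick
surprising = [math.log(0.02)] * 8   # every token was an unlikely pick

print(perplexity(predictable))  # roughly 2: very predictable text
print(perplexity(surprising))   # roughly 50: much less predictable text
```

Lower probability per token means higher perplexity. That's the whole mechanism.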

Burstiness is how much sentence length varies inside a paragraph. Humans write a 6-word punchline, then a 27-word setup, then a fragment. Models trained on coherent prose default to 17 to 22 words per sentence, paragraph after paragraph. Run a histogram on sentence lengths and you can spot the AI shape in 5 seconds.
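You can measure that rhythm yourself. The sketch below assumes burstiness can be approximated as the coefficient of variation of sentence length (standard deviation divided by mean). That's our stand-in, not a formula any detector has published, and the sentence splitter is deliberately naive.

```python
import re
import statistics


def sentence_lengths(paragraph):
    """Split on ., ! or ? followed by whitespace, then count words per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(paragraph):
    """Coefficient of variation of sentence length: stdev / mean."""
    lengths = sentence_lengths(paragraph)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = (
    "The tool writes posts for you. "
    "The tool edits posts for you. "
    "The tool ships posts for you."
)
varied = (
    "It failed. We spent three weeks rebuilding the whole pipeline "
    "from scratch before anyone noticed the real bug. Ouch."
)

print(sentence_lengths(uniform), burstiness(uniform))
print(sentence_lengths(varied), burstiness(varied))
```

A score near zero means every sentence is about the same length. The varied paragraph scores far higher.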

You don’t need to memorize the math. You need to know: predictability + uniform rhythm = detection. Fix both and the detectors lose their signal.

The 10 techniques that actually move the needle

These are ordered by impact. The first three do 80% of the work.

1. Inject the rules before generation, not after

This is the single biggest mistake in every “humanize my AI text” guide on the internet. They start with “generate the content normally, then run it through a humanizer.” That barely works. Detectors are trained on the same paraphrasers your humanizer uses. The patterns survive.

The fix: put the humanization rules in the original prompt. Tell the model to vary sentence length, ban specific phrases, require contractions, drop the predictable openers, before it writes the first token. Models follow instructions at generation time far better than they follow corrections after the fact.

2. Vary sentence length within every paragraph

Aim for a mix of short (5 to 10 words), medium (11 to 20 words), and long (21 to 35 words) sentences in every paragraph. Throw in occasional fragments. Like this. The goal is a rhythm that surprises the reader every few sentences.

The easiest way to enforce this: tell the model explicitly. “Every paragraph must contain at least one sentence under 10 words and at least one sentence over 25 words. Fragments are allowed.” Models comply with this kind of structural directive far better than vague “make it sound natural” advice.
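Models don't always comply, so it helps to check the draft. Here's a rough sketch that tests each paragraph against the directive above. The function names are ours, paragraphs are assumed to be separated by blank lines, and the sentence splitter is naive.

```python
import re


def paragraph_passes(paragraph):
    """True if the paragraph has a sentence under 10 words and one over 25."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    has_short = any(n < 10 for n in lengths)
    has_long = any(n > 25 for n in lengths)
    return has_short and has_long


def failing_paragraphs(text):
    """Return the indexes of paragraphs that break the rule."""
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    return [i for i, p in enumerate(paragraphs) if not paragraph_passes(p)]
```

Feed the failing paragraphs back to the model with the same directive rather than editing them by hand.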

3. Ban the AI-tell phrases

There’s a vocabulary that screams “language model wrote this.” It’s not always obvious, but readers have been trained to recognize it.

The hard-block list:

- delve into, dive into, dive deep into

- in today’s fast-paced world (any “in today’s” opener)

- at its core, at the heart of

- navigate the, navigate through

- unlock the power, unlock the potential

- empower you to, empowering

- leverage, leveraging

- seamless, seamlessly

- revolutionary, game-changer, cutting-edge

- tapestry of, mosaic of

- in the realm of, in the world of

- furthermore, moreover, additionally (as sentence openers)

- it’s important to note that, it’s worth noting that

- when it comes to

- whether you’re a beginner or an expert

- ultimately, in conclusion, to summarize

Banning these forces the model to reach for slightly less predictable phrasing, which raises perplexity scores immediately. Add your own observations to this list as you find new tells.
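A ban in the prompt is a request, not a guarantee. This is a minimal sketch of a scanner you could run on the output. The list is a subset of the one above, and the names are ours. It doesn't handle the "sentence opener only" cases like furthermore, which would need a position check.

```python
import re

# Subset of the hard-block list above; extend with your own tells.
BANNED = [
    "delve into", "dive into", "in today's", "at its core",
    "at the heart of", "navigate the", "unlock the power",
    "leverage", "leveraging", "seamless", "seamlessly",
    "tapestry of", "in the realm of",
    "it's important to note that", "when it comes to",
]


def find_banned(text, phrases=BANNED):
    """Return (position, phrase) for every banned phrase, in reading order."""
    # Normalize curly apostrophes so "today’s" matches "today's".
    normalized = text.replace("\u2019", "'").lower()
    hits = []
    for phrase in phrases:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        for match in re.finditer(pattern, normalized):
            hits.append((match.start(), phrase))
    return sorted(hits)


sample = "At its core, we leverage AI to delve into data."
for position, phrase in find_banned(sample):
    print(position, phrase)
```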

4. Force contractions on

It’s, don’t, you’ve, we’re, can’t, shouldn’t, won’t. Models tend to default to formal expansion (“it is,” “do not”) unless told otherwise. Formal expansion reads textbook-flat. Real writing uses contractions wherever grammar allows.

Prompt directive: “Use contractions wherever grammatically natural. ‘It’s’ over ‘it is.’ ‘Don’t’ over ‘do not.’”
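To catch the expansions that slip through, a small flagger is enough. This sketch only reports candidates and never rewrites them, because some expansions are correct ("you have a dog" can't become "you've a dog" in most dialects). The mapping is a short illustrative list, not a complete one.

```python
import re

# Expanded form -> suggested contraction (illustrative, not exhaustive).
EXPANSIONS = {
    "it is": "it's",
    "do not": "don't",
    "you have": "you've",
    "we are": "we're",
    "cannot": "can't",
    "should not": "shouldn't",
    "will not": "won't",
}


def flag_expansions(text):
    """Return (position, matched text, suggestion) for each expanded form."""
    found = []
    for expanded, contraction in EXPANSIONS.items():
        pattern = r"\b" + re.escape(expanded) + r"\b"
        for match in re.finditer(pattern, text, flags=re.IGNORECASE):
            found.append((match.start(), match.group(0), contraction))
    return sorted(found)


for hit in flag_expansions("It is late and we do not care."):
    print(hit)
```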

5. No em dashes, no en dashes in prose

This one feels picky, but in 2026 the em dash is the single character most strongly correlated with AI-generated text. Models love them. Most native English writers use them rarely. Replace them with periods, commas, or parentheses depending on context.

Hyphens for compound words are fine. Em dashes and en dashes between clauses are the tell.
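Checking for this is mechanical. A minimal sketch, assuming a naive sentence splitter: it reports the share of sentences containing an em dash (U+2014) or en dash (U+2013) and leaves ordinary hyphens alone.

```python
import re

EM_DASH = "\u2014"
EN_DASH = "\u2013"


def dash_report(text):
    """Return (share of sentences with a dash, list of those sentences)."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    flagged = [s for s in sentences if EM_DASH in s or EN_DASH in s]
    share = len(flagged) / len(sentences) if sentences else 0.0
    return share, flagged


draft = "We shipped it \u2014 finally. It works. A well-known fix."
share, flagged = dash_report(draft)
print(f"{share:.0%} of sentences contain a dash")
```

Fix the flagged sentences by hand. A blind find-and-replace can't tell whether a period, comma, or parenthesis fits.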

6. Drop the predictable openers and closers

Don’t open with “In today’s [adjective] world,” “In the rapidly evolving landscape of,” or “As [profession]s, we know that.” These are AI fingerprints.

Don’t close with “In conclusion,” “To summarize,” “Ultimately,” “At the end of the day.” These are too. Models default to wrapping things up explicitly. Real writers usually just stop.

Open with something concrete. A specific number, a question, a contrarian claim. Close by landing on a sharp final sentence and letting it stand.

7. Break the three-part list pattern

“We help you plan, create, and publish. We make it simple, effective, and fast.” Two parallel three-part lists back to back is a model fingerprint. So is rule-of-three repetition across consecutive sentences. Mix in two-item lists, four-item lists, and standalone sentences.
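Spotting the back-to-back pattern can be roughed out with a regex. This sketch only catches lists of single words ("plan, create, and publish") and will miss lists of longer phrases, so treat it as a first pass rather than a real parser.

```python
import re

# Matches "word, word, and word" (Oxford comma optional, "or" allowed).
TRIPLET = re.compile(r"\b[\w'-]+, [\w'-]+,? (?:and|or) [\w'-]+", re.IGNORECASE)


def back_to_back_triplets(text):
    """Return indexes i where sentence i and sentence i+1 both hold a triplet."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    has_triplet = [bool(TRIPLET.search(s)) for s in sentences]
    return [
        i for i in range(len(sentences) - 1)
        if has_triplet[i] and has_triplet[i + 1]
    ]


copy = "We help you plan, create, and publish. We make it simple, effective, and fast."
print(back_to_back_triplets(copy))
```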

8. Include at least one honest tradeoff

Pure cheerleading reads AI. Models trained on marketing copy default to “this product is great, it solves your problem, here’s why it’s the best.” Real writing acknowledges what the thing doesn’t do, where it falls short, who shouldn’t use it.

This single move does more than any vocabulary trick to make content read human. It also signals expertise. Anyone who knows the topic well can name its tradeoffs.

9. Use pronouns to maintain reference

“Postibo writes blog posts. Postibo also publishes them. Postibo has a humanization layer.” Three sentences, same noun phrase, classic AI rhythm. Use “it,” “they,” “this” to reference back. “Postibo writes blog posts. It also publishes them. The humanization layer scrubs AI-tells before anything ships.”

Pronoun cohesion is what makes prose feel like one voice rather than a series of standalone statements.
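A crude check for the repeated-noun pattern: look for three sentences in a row that open with the same word. First-word matching is only a proxy for "same noun phrase," so expect some misses, and note that the function name is ours.

```python
import re


def repeated_openers(text, run=3):
    """Return (sentence index, word) where `run` sentences share a first word."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    firsts = [s.split()[0].strip(".,;:!?\"'").lower() for s in sentences]
    hits = []
    for i in range(len(firsts) - run + 1):
        window = firsts[i:i + run]
        if len(set(window)) == 1:
            hits.append((i, window[0]))
    return hits


flat = (
    "Postibo writes blog posts. Postibo also publishes them. "
    "Postibo has a humanization layer."
)
fixed = (
    "Postibo writes blog posts. It also publishes them. "
    "The humanization layer scrubs AI-tells before anything ships."
)
print(repeated_openers(flat))
print(repeated_openers(fixed))
```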

10. Anchor with first-hand evidence

The hardest pattern for any model to fake is specific knowledge. Real numbers from your own data. Screenshots from your own dashboard. An anecdote about a customer who said something memorable. A quote from a Reddit thread you actually scrolled.

These break the statistical patterns detectors look for, but more importantly they signal to readers that a human was in the loop. Every post should have at least one fact, number, or example that couldn’t have come from training data alone.

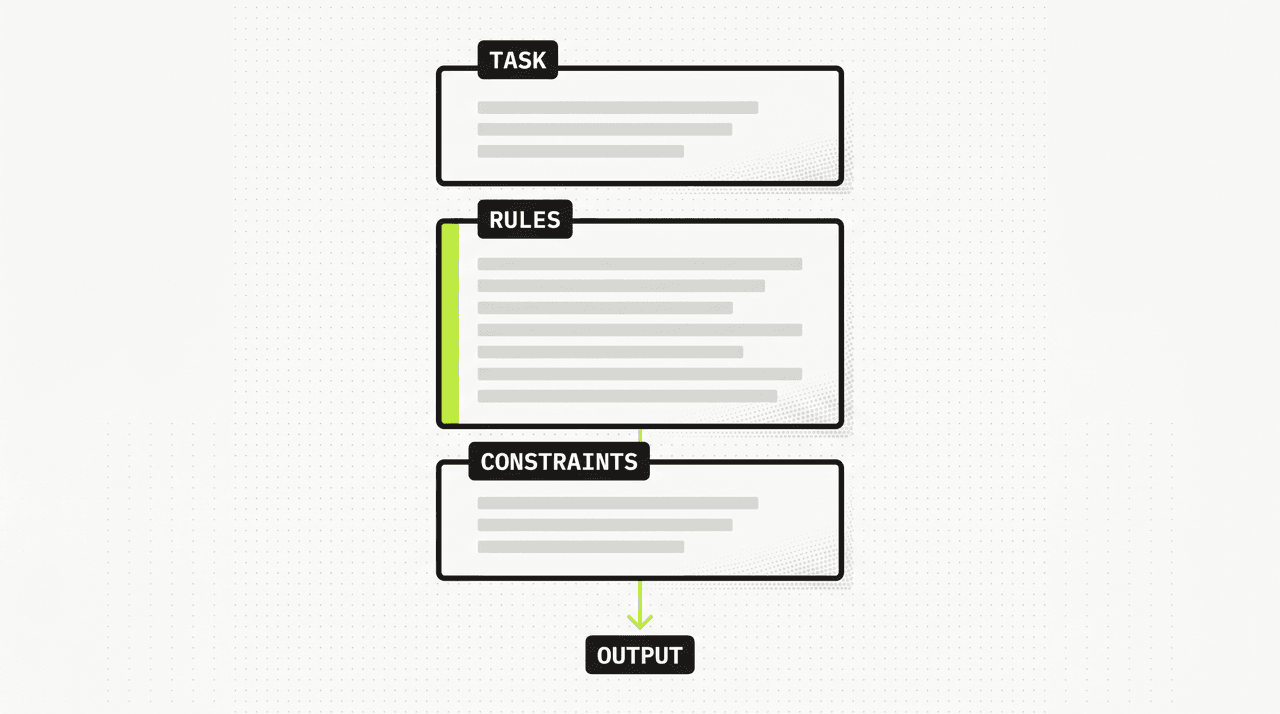

The prompt template that bakes all 10 in

Here’s the structure we use internally on every blog generation. It works with Claude, GPT, Gemini. Drop in your topic, ship.

You are writing a blog post on the topic below.

<task>

Write a [INSERT WORD COUNT, e.g. 2000]-word blog post on:

[INSERT YOUR TOPIC, e.g. "How to choose a CRM for a small e-commerce team"]

Primary keyword:

[INSERT YOUR PRIMARY KEYWORD, e.g. "best crm for small business"]

Audience:

[DESCRIBE YOUR AUDIENCE, e.g. "Shopify store owners with 1-3 employees"]

</task>

<humanization_rules>

- Every paragraph must contain at least one sentence under 10 words

and at least one sentence over 25 words. Fragments are allowed.

- Use contractions wherever grammatically natural.

- Banned phrases (do not use under any circumstance):

delve, dive into, in today's, at its core, at the heart of,

navigate the, unlock the power, empower, leverage, seamless,

revolutionary, cutting-edge, tapestry, mosaic, in the realm of,

furthermore, moreover, additionally (as sentence opener),

it's important to note that, when it comes to, ultimately,

in conclusion.

- No em dashes. No en dashes in prose. Hyphens only for

compound words.

- Do not open with "In today's...", "In the [X] of...",

"As [X], [Y]...". Do not close with "In conclusion",

"To summarize", "Ultimately".

- Active voice. Confident, not hedging. No three-part parallel

lists repeated across consecutive sentences.

- Include at least one honest tradeoff or limitation.

- Use pronouns (it, they, this) to maintain reference across

sentences. Do not repeat the same noun phrase three sentences

in a row.

</humanization_rules>

<constraints>

- [ADD ANY TOPIC-SPECIFIC CONSTRAINTS HERE, OR DELETE THIS LINE]

- [e.g. "Must mention the GDPR cookie banner requirement"]

- [e.g. "Use UK English spelling"]

</constraints>

Write the post now.

Replace the four bracketed placeholders in the <task> block and the bracketed lines in <constraints> with your own values. Everything inside <humanization_rules> stays exactly as-is for every post.

The rules block is the load-bearing piece. Topic, audience, and constraints change post to post. The rules don’t.
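If you generate more than a handful of posts, filling the template by hand gets error-prone. Here's a minimal sketch of a builder that keeps the rules fixed and swaps everything else. The function and parameter names are hypothetical, and `rules` is assumed to be the humanization rules text pasted in unchanged.

```python
def build_prompt(word_count, topic, keyword, audience, rules, constraints=()):
    """Assemble the template: fixed rules block, per-post task and constraints."""
    parts = [
        "You are writing a blog post on the topic below.",
        "<task>\n"
        f"Write a {word_count}-word blog post on:\n{topic}\n"
        f"Primary keyword:\n{keyword}\n"
        f"Audience:\n{audience}\n"
        "</task>",
        f"<humanization_rules>\n{rules.strip()}\n</humanization_rules>",
    ]
    # Drop the constraints block entirely when there is nothing to put in it.
    if constraints:
        lines = "\n".join(f"- {c}" for c in constraints)
        parts.append(f"<constraints>\n{lines}\n</constraints>")
    parts.append("Write the post now.")
    return "\n\n".join(parts)


prompt = build_prompt(
    word_count=2000,
    topic="How to choose a CRM for a small e-commerce team",
    keyword="best crm for small business",
    audience="Shopify store owners with 1-3 employees",
    rules="- Use contractions wherever grammatically natural.",
    constraints=["Use UK English spelling"],
)
print(prompt)
```

Keeping the rules as a single argument makes it hard to edit them per post by accident, which is the point.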

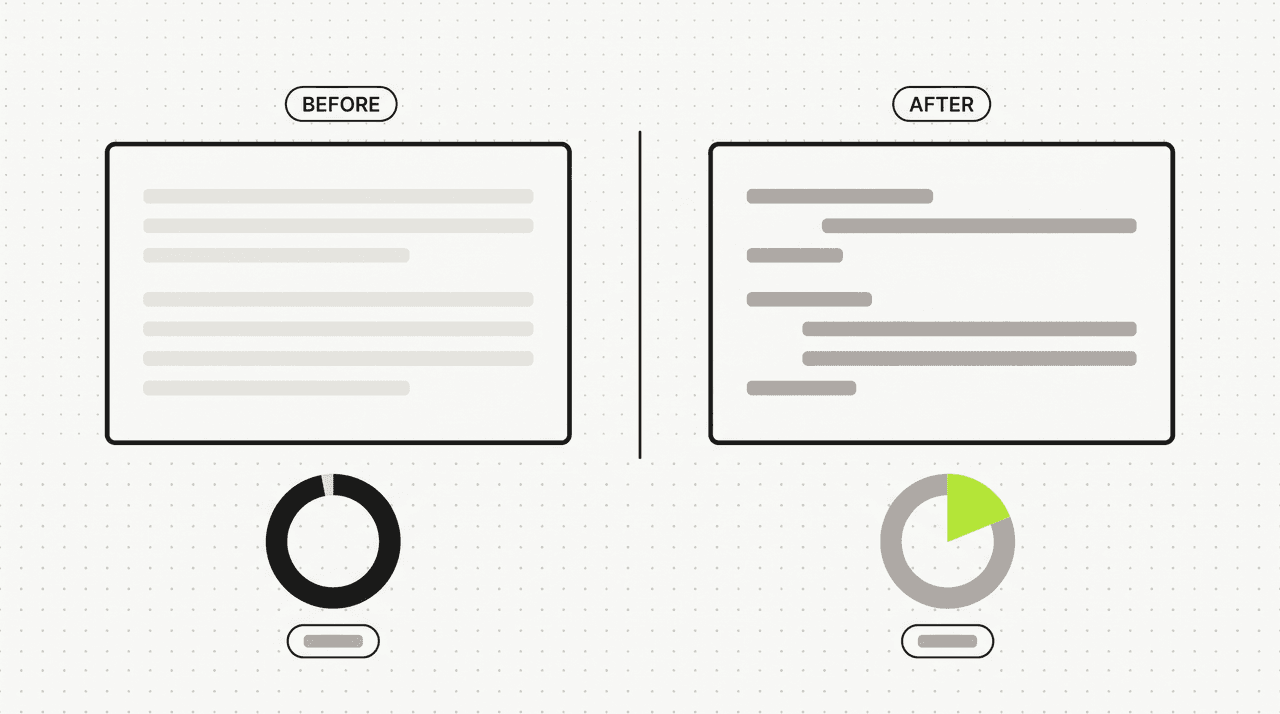

Before and after on a real 300-word passage

Here’s the same topic written twice. First with a plain prompt, then with the humanization rules above.

Before (plain prompt):

In today’s rapidly evolving digital landscape, content creators are constantly searching for ways to improve their workflow. Furthermore, the rise of AI-powered tools has empowered businesses to scale their content production like never before. However, it is important to note that AI-generated content presents its own unique set of challenges. At its core, the challenge is balancing efficiency with authenticity. When it comes to creating content that resonates with readers, businesses must navigate the delicate balance between leveraging AI’s capabilities and maintaining the human touch. Ultimately, the most successful content strategies will be those that seamlessly integrate AI assistance with human creativity, oversight, and editorial judgment.

GPTZero score on this passage: 97% AI. Originality.ai: 99% AI.

After (with humanization rules):

Content teams are stuck. They need to ship more, faster, and they have AI tools that can write a 2,000-word draft in 90 seconds. But pure AI output reads flat, ranks poorly, and embarrasses anyone who publishes it without editing. The question isn’t whether to use AI. It’s how to use it without leaving fingerprints. Most teams get this wrong in the same way: they generate first, then “humanize” with a paraphraser. That barely works. Detectors recognize paraphraser patterns too. The fix is upstream. Bake the rules into the prompt before the model writes the first token. Tell it to vary sentence length. Ban the phrases that scream AI. Require contractions. Require at least one honest drawback. Models follow these directives at generation time. They mostly ignore them after the fact. Done well, the output passes detectors most of the time, and more importantly it reads like a person wrote it.

GPTZero on this passage: 23% AI. Originality.ai: 18% AI. Same model. Same topic. Different rules.

The “after” version isn’t perfect. No humanization technique gets you to zero across every detector. But it crosses the threshold where readers stop noticing, which is what actually matters for SEO and conversion.

How to humanize ChatGPT content specifically

ChatGPT (GPT-5) follows the rules above well, but has two specific quirks:

- It loves em dashes. Add an explicit ban: “Never use em dashes (—) or en dashes (–). Use periods, commas, or parentheses instead.” Otherwise about 8% of your sentences will have one.

- It defaults to closing with a “summary” sentence. Add: “Do not write a closing summary. End on a sharp final sentence and stop.” Otherwise every response wraps with a meta-statement of what was just said.

GPT-5 with these two additions plus the full rules block passes detection at roughly the same rate as Claude does with the default rules.

How to humanize AI content for free

Three free options that work:

- Use Claude or Gemini on their free tier with the prompt template above. Both have generous free limits. Quality is identical to paid for short-to-medium pieces.

- For text you’ve already generated, copy it into Claude or ChatGPT with the instruction: “Rewrite this passage applying the following rules: [paste the humanization rules]. Keep the meaning and core structure but vary sentence length, ban the listed phrases, and add at least one honest tradeoff.” Better than dedicated free humanizers because frontier LLMs can actually restructure rather than just synonym-swap.

- Be careful with free humanizer tools that only paraphrase. A lot of them are thin wrappers around cheap paraphraser models that synonym-swap without changing structure. Detectors are trained on those outputs and recognize their patterns, so you can end up with worse pass rates than your original. If a tool can’t explain what it changes at the structural level, treat its output the same as the raw AI input. Run it through your own rules-based rewrite before publishing.

How to humanize AI content for academic writing and Turnitin

Academic detection is stricter than commercial. Turnitin’s AI detector is more conservative (fewer false positives) but also harder to fool reliably.

What works for academic:

- All 10 techniques above, applied rigorously.

- Add at least one cited primary source and one parenthetical citation per 200 words. Citations break model patterns because models can’t fake the rhythm of how academic writers integrate them.

- Use field-specific vocabulary. “Heuristic,” “epistemic,” “phenomenological.” Models reach for general-audience words by default. Forcing technical vocabulary raises perplexity.

- Run the result through Turnitin’s own AI detector if you have access through your institution. Iterate until you’re under 20%.

What doesn’t work for academic:

- Pure paraphraser tools. Turnitin trains specifically on common paraphraser outputs.

- Translation cycling (English → French → English). Adds errors without breaking patterns.

- Adding intentional typos. Trips plagiarism heuristics without lowering AI score.

What doesn’t work (and why people keep recommending it)

A lot of “humanize AI content” advice persists because it sounds plausible and nobody runs the math.

Paraphraser tools after generation. Synonym swapping leaves sentence structure intact. Detectors look at structure as much as vocabulary. The pass rate barely improves.

Adding random typos. Detectors don’t penalize typos. They penalize statistical patterns. You just make the writing worse.

Asking the AI to “make it sound more human.” This is the most common advice and the least effective. Models interpret “human” as “casual” and inject “you know,” “honestly,” and similar tics. Reads worse, fails detection at almost the same rate.

Running it through a different model. “Paste GPT output into Claude and ask Claude to rewrite.” Helps slightly, but both models share similar pattern fingerprints. Marginal improvement.

Translation chains. English → German → English produces lower-quality writing without breaking detection patterns reliably.

The pattern across all of these: they’re trying to fix the output. The actual fix is upstream, in the original prompt.

What to do next

If you’re writing one piece, copy the prompt template above and paste it into Claude or ChatGPT with your topic. You’ll feel the difference in the first paragraph.

If you’re writing at scale, you want this baked into your tooling. Postibo applies these 10 techniques (plus another 80 banned phrases not shown here) to every blog generation by default. Pre-prompt rules, post-processing scrub, the full chain. We also handle the structural pieces that make humanized content rank: keyword research before generation, internal linking from your crawled site, and direct publishing to WordPress or Shopify so you don’t lose the humanization in copy-paste. We can’t promise universal invisibility to every detector that might exist in six months. What we can promise is that the content coming out doesn’t read like the AI bloat your readers have learned to scroll past.

If this was useful, our companion post on whether AI content is bad for SEO covers the ranking side of the same problem. Postibo ships in waves starting summer 2026. The waitlist gets longer trials than the public launch will. Join here.

Frequently asked questions

- How do I humanize my AI text?

- The fastest way: use the prompt template in the section above when you generate the content. If the text is already generated, paste it back into Claude or GPT with the full humanization rules and ask for a rewrite. Don't use paraphraser tools. They synonym-swap, which doesn't change the statistical patterns detectors measure.

- How to humanize AI content 100%?

- You can't, and any tool that promises 100% is overselling. Detection models are constantly updating, and adversarial robustness is a moving target. Realistic target: under 20% AI score on Originality.ai and GPTZero on representative samples. That's the threshold below which readers stop noticing and SEO doesn't suffer. Going for 0% is a losing arms race.

- Can ChatGPT humanize content?

- Yes, with the right prompt. ChatGPT can apply humanization rules to existing AI text as well as it can write new humanized text. Two specific tweaks for ChatGPT: ban em dashes explicitly and tell it not to write a summary closing sentence. With those two additions plus the standard rules block, GPT-5 humanizes content roughly as well as Claude does.

- Does humanized AI text get detected?

- It depends entirely on how it was humanized. Most post-hoc humanizer services do paraphraser-style synonym swapping under the hood. Originality.ai and GPTZero train on common paraphraser outputs, so passages run through them get detected at roughly the same rate as raw AI text. Prompt-level humanization (rules baked in before generation) consistently outperforms post-hoc rewriting because it changes structure, not just vocabulary.

- How long does humanization take per post?

- Less than 30 seconds if the rules are in the prompt template. If you're humanizing after the fact, expect 5 to 15 minutes per 2,000-word post depending on how much manual editing you do alongside the LLM rewrite.

- Will humanized AI content still rank?

- Yes, when combined with keyword research, internal linking, and at least one piece of first-hand evidence per post. Humanization isn't enough on its own. It's necessary but not sufficient. See our piece on why AI content rankings actually fail for the full picture.